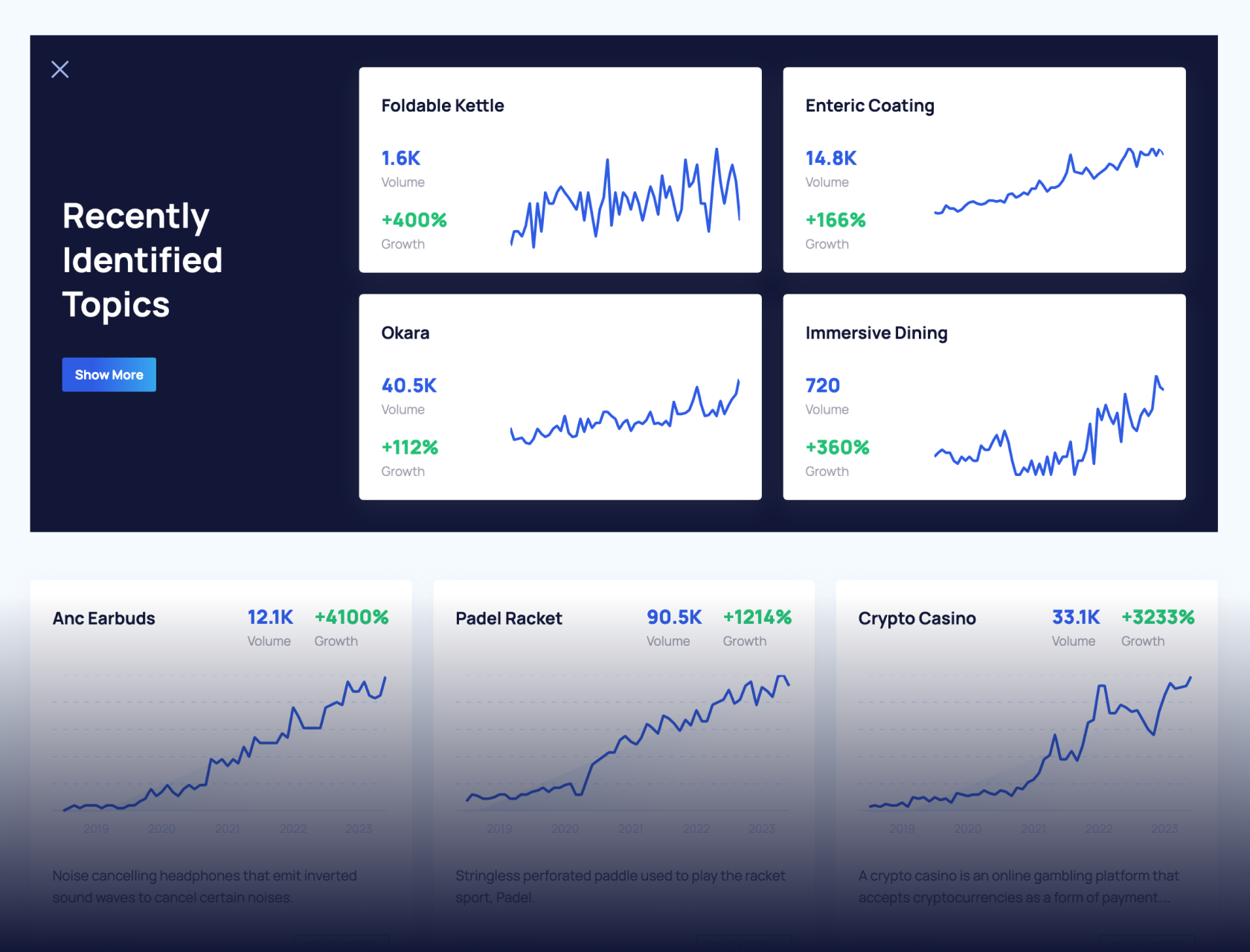

Get Advanced Insights on Any Topic

Discover Trends 12+ Months Before Everyone Else

How We Find Trends Before They Take Off

Exploding Topics’ advanced algorithm monitors millions of unstructured data points to spot trends early on.

Keyword Research

Performance Tracking

Competitor Intelligence

Fix Your Site’s SEO Issues in 30 Seconds

Find technical issues blocking search visibility. Get prioritized, actionable fixes in seconds.

Powered by data from

Latest Blog Posts

Featured Case Studies

See what's trending before everyone else

Each week, we'll send you our best Exploding Topics. Plus, expert insight and analysis.

Top 50+ Large Language Models (LLMs) in 2026

Large language models are pre-trained on large datasets and use natural language processing to perform linguistic tasks such as text generation, code completion, paraphrasing, and more.

The initial release of ChatGPT sparked the rapid adoption of generative AI, which has led to large language model innovations and industry growth.

List of LLMs (Updated)

This table lists the leading large language models in 2026.

| LLM Name | Developer | Release Date | Access | Parameters |

| Gemini 3.1 Pro | Google DeepMind | Feb 19, 2026 | API | Unknown |

| Claude Sonnet 4.6 | Anthropic | Feb 17, 2026 | API | Unknown |

| Claude Opus 4.6 | Anthropic | Feb 5, 2026 | API | Unknown |

| Gemini 3 Flash | Google DeepMind | Dec 17, 2025 | API | Unknown |

| Nemotron 3 | Nvidia | Dec 15, 2025 | Open Source | Nano 30B, Super 100B, Ultra 500B |

| GPT-5.2 | OpenAI | Dec 11, 2025 | API | Unknown |

| Mistral Large 3 | Mistral AI | Dec 2, 2025 | API, Open Source | 41B active (MoE) |

| DeepSeek-V3.2 | DeepSeek | Dec 1, 2025 | API, Open Source | Unknown |

| Claude Opus 4.5 | Anthropic | Nov 24, 2025 | API | Unknown |

| Grok 4.1 | xAI | Nov 17, 2025 | API | Unknown |

| Gemini 3 Pro | Google DeepMind | Nov 18, 2025 | API | Unknown |

| GPT-5.1 | OpenAI | Nov 12, 2025 | API | Unknown |

| Claude Sonnet 4.5 | Anthropic | Sep 29, 2025 | API | Unknown |

| DeepSeek-V3.1 | DeepSeek | Aug 2025 | API, Open Source | Unknown |

| GPT-5 | OpenAI | August 7, 2025 | API | Unknown |

| Claude 4.1 | Anthropic | August 5, 2025 | API | Unknown |

| Grok 4 | xAI | July 9, 2025 | API | Unknown |

| Claude Sonnet 4 | Anthropic | May 22, 2025 | API | Unknown |

| Claude Opus 4 | Anthropic | May 22, 2025 | API | Unknown |

| Qwen 3 | Alibaba | April 29, 2025 | API, Open Source | 235B |

| GPT-o4-mini | OpenAI | April 16, 2025 | API | Unknown |

| GPT-o3 | OpenAI | April 16, 2025 | API | Unknown |

| GPT-4.1 | OpenAI | April 14, 2025 | API | Unknown |

| Llama 4 Scout | Meta AI | April 5, 2025 | API | 17B |

| Llama 4 Maverick | Meta AI | April 5, 2025 | Open Source | 400B (17B active, MoE) |

| Gemini 2.5 Pro | Google DeepMind | Mar 25, 2025 | API | Unknown |

| GPT-4.5 | OpenAI | Feb 27, 2025 | API | Unknown |

| Claude 3.7 Sonnet | Anthropic | Feb 24, 2025 | API | Unknown (est. 200B+) |

| Grok-3 | xAI | Feb 17, 2025 | API | Unknown |

| Gemini 2.0 Flash-Lite | Google DeepMind | Feb 5, 2025 | API | Unknown |

| Gemini 2.0 Pro | Google DeepMind | Feb 5, 2025 | API | Unknown |

| GPT-o3-mini | OpenAI | Jan 31, 2025 | API | Unknown |

| Qwen 2.5-Max | Alibaba | Jan 29, 2025 | API | Unknown |

| DeepSeek R1 | DeepSeek | Jan 20, 2025 | API, Open Source | 671B (37B active) |

| DeepSeek-V3 | DeepSeek | Dec 26, 2024 | API, Open Source | 671B (37B active) |

| Gemini 2.0 Flash | Google DeepMind | Dec 11, 2024 | API | Unknown |

| Nova | Amazon | Dec 3, 2024 | API | Unknown |

| Claude 3.5 Sonnet | Anthropic | Oct 22, 2024 | API | Unknown |

| GPT-o1 | OpenAI | Sept 12, 2024 | API | Unknown (o1-mini est. ~100B) |

| DeepSeek-V2.5 | DeepSeek | Sept 5, 2024 | API, Open Source | Unknown |

| Grok-2 | xAI | Aug 13, 2024 | API | Unknown |

| Mistral Large 2 | Mistral AI | July 24, 2024 | API | 123B |

| Llama 3.1 | Meta AI | July 23, 2024 | Open Source | 405B |

| GPT-4o mini | OpenAI | July 18, 2024 | API | ~8B (est.) |

| Nemotron-4 | Nvidia | July 14, 2024 | Open Source | 340B |

| Claude 3.5 Sonnet | Anthropic | June 20, 2024 | API | ~175-200B (est.) |

| GPT-4o | OpenAI | May 13, 2024 | API | ~1.8T (est.) |

| DeepSeek-V2 | DeepSeek | May 6, 2024 | API, Open Source | Unknown |

| Phi-3 | Microsoft | April 23, 2024 | API, Open Source | Mini 3B, Small 7B, Medium 14B |

| Mixtral 8x22B | Mistral AI | April 10, 2024 | Open Source | 141B (39B active) |

| Jamba | AI21 Labs | Mar 29, 2024 | Open Source | 52B (12B active) |

| DBRX | Databricks' Mosaic ML | Mar 27, 2024 | Open Source | 132B |

| Command R | Cohere | Mar 11, 2024 | API, Open Source | 35B |

| Inflection-2.5 | Inflection AI | Mar 7, 2024 | Proprietary | Unknown (predecessor ~400B) |

| Gemma | Google DeepMind | Feb 21, 2024 | API, Open Source | 2B, 7B |

| Gemini 1.5 | Google DeepMind | Feb 15, 2024 | API | ~1.5T Pro, ~8B Flash (est.) |

| Stable LM 2 | Stability AI | Jan 19, 2024 | Open Source | 1.6B, 12B |

| Grok-1 | xAI | Nov 4, 2023 | API, Open Source | 314 billion |

| Mistral 7B | Mistral AI | Sept 27, 2023 | Open Source | 7.3 billion |

| Falcon 180B | Technology Innovation Institute | Sept 6, 2023 | Open Source | 180 billion |

| XGen-7B | Salesforce | July 3, 2023 | Open Source | 7 billion |

| PaLM 2 | May 10, 2023 | API | 340 billion | |

| Alpaca 7B | Stanford CRFM | Mar 13, 2023 | Open Source | 7 billion |

| Pythia | EleutherAI | Mar 13, 2023 | Open Source | 70 million to 12 billion |

Context Windows and Knowledge Boundaries

LLMs with a larger context window size can handle longer inputs and outputs. The context window, therefore, determines how much information an LLM processes before its performance starts to degrade. The knowledge cutoff date determines the end date of the data used in training.

(It's worth noting that context windows are not the be-all-and-end-all of LLMs. Practicing context engineering on models with smaller windows can even produce better results.)

| LLM Name | Context Window (Tokens) | Knowledge Cutoff Date | Release Date |

| Llama 4 Scout | 10,000,000 | August 2024 | Apr 2025 |

| Grok 4.1 | 2,000,000 | November 2024 | Late 2025 |

| Gemini 3.1 Pro | 1,000,000 | January 2025 | Feb 2026 |

| Gemini 3 Pro | 1,000,000 | January 2025 | Nov 2025 |

| Gemini 3 Flash | 1,000,000 | January 2025 | Late 2025 |

| Llama 4 Maverick | 1,000,000 | August 2024 | Apr 2025 |

| Claude Sonnet 4 | 1,000,000 (upgraded from 200K) | March 2025 | May 2025 |

| Gemini 2.5 Flash | 1,000,000 | January 2025 | 2025 |

| GPT-5.2 (Instant/Thinking/Pro) | 400,000 | August 2025 | Dec 2025 |

| GPT-5.1 | 400,000 | September 2024 | Nov 2025 |

| GPT-5 | 400,000 | September 2024 | Aug 2025 |

| Grok 4 | 256,000 | November 2024 | Jul 2025 |

| Claude 4.6 Opus | 200,000 | August 2025 (training) / May 2025 (reliable) | Feb 2026 |

| Claude 4.6 Sonnet | 200,000 | January 2026 (training) / August 2025 (reliable) | |

| Claude 4.5 Opus | 200,000 | August 2025 (training) / May 2025 (reliable) | Nov 2025 |

| Claude 4.5 Sonnet | 200,000 | July 2025 (training) / January 2025 (reliable) | Sep 2025 |

| Claude 4.5 Haiku | 200,000 | July 2025 (training) / February 2025 (reliable) | Oct 2025 |

| Claude 4 Opus | 200,000 | March 2025 | May 2025 |

| Kimi K2 | 1,000,000,000 (1T MoE) | ~Mid-2025 | Jul 2025 |

| DeepSeek-V3-0324 | 128,000 | ~Early 2025 | Mar 2025 |

| DeepSeek R1 | 131,072 | January 2025 | Jan 2025 |

| Qwen 3 (235B-A22B) | 128,000 | Unknown | Apr 2025 |

| GPT-4.1 | 1,047,576 | June 2024 | Apr 2025 |

| GPT-o3 | 200,000 | June 2024 | Jan 2025 |

| GPT-o4-mini | 200,000 | June 2024 | Apr 2025 |

| Gemini 2.5 Flash-Lite | 1,000,000 | January 2025 | 2025 |

| Grok 3 | 131,072 | November 2024 | Feb 2025 |

As adoption continues to grow, so does the LLM industry.

- The global large language model market is projected to grow from $6.5 billion in 2024 to $140.8 billion by 2033

- 92% of Fortune 500 firms have started using generative AI in their workflows

- Generative AI is disrupting the SEO industry and changing the way we find information online

- LLM search will drive 75% of search revenue by 2028

Here's a deeper dive on some of the most important models over the last 3 years.

1. GPT-5.2

Developer: OpenAI

Release date: December 2025

Number of Parameters: Unknown

Context Window (Tokens): 400,000

Knowledge Cutoff Date: August 2025

What is it? GPT-5.2 is an iteration of the is the largest OpenAI model to date: GPT-5. In benchmarking, it outperforms earlier OpenAI models in most tests.

The ChatGPT website continues to be one of the world's most popular sites, receiving more than 5.5 billion visitors from organic search in February 2026.

Unlike earlier models that relied solely on unsupervised learning, GPT-5.2 incorporates advanced multimodal and reasoning capabilities, enabling more accurate and context-aware interactions.

It also has superior agentic capabilities and tool-calling than earlier versions. The GPT-5 lineup hallucinates less than older models, but some benchmarks show GPT-5.2 has a higher hallucination rate at 39% than GPT-5 (18%).

OpenAI has positioned GPT-5 as a major step forward, offering improved performance in tasks requiring logic, planning, and real-time understanding.

Pro users began gaining access to GPT-5 in mid-August 2025. Access will expand to Team, Enterprise, and Education users in early September.

ChatGPT is an important platform for marketing as well. As one of the first major LLMs, it has one of the largest base of users.

This is why companies want to boost AI visibility of their products and brand in ChatGPT to win voice of share and discovery opportunities in AI-powered search.

You can benchmark your brand performance in AI search by using this free AI visibility checker.

2. Gemini 3.1 Pro

Developer: Google DeepMind

Release date: February 19, 2026

Number of Parameters: Unknown

Context Window (Tokens): 1,000,000

Knowledge Cutoff Date: January 2025

What is it? Gemini 3.1 offer is the latest Gemini model, offering a one million-token context window on release.

This model has outstanding reasoning performance, scoring 77.1% on a test that measures the ability of an AI recognize novel patterns not fed during training. The second best model is Claude Opus 4.6 when measured on this benchmark, but it scores only 68.8%, putting Gemini well ahead of all competition right now.

With Nano Bano also rolled in for image generation and editing, the current iteration of Gemini stands as one of the most versatile AI tools with all-around strong capabilities.

Gemini also boasts stunning SVG animations that run directly in the chat interface. It's one of differentiator that's not yet available to the same level in competitor tools yet.

3. DeepSeek-V3.2

Developer: DeepSeek

Release date: December 1, 2025

Number of Parameters: 685B total (MoE)

Context Window (Tokens): 163,840 (input) / 65,536 (output)

Knowledge Cutoff Date: Unknown (estimated mid-2025)

What is it? DeepSeek-V3.2 is a reasoning model that excels in math and coding. DeepSeek's earliest models outperformed mainstread models like OpenAI o1 at first launch.

However, models like GPT-5.2, Claude Opus 3.6, and Gemini 3.1 and beyond have surpassed DeepSeek in several intelligence benchmarks.

(We've done a thorough DeepSeek vs ChatGPT comparison, where we put the R1 model to the test.)

That said, there's one area where DeepSeek still outshines its competitors: handling massive context windows far more cheaply.

DeepSeek V3.2 costs $0.25 per million tokens for input and $0.40 per million tokens for output. It's only a fraction of what Google, OpenAI, or Anthropic charge for comparable performance .

This is critical advantage for enterprise deployment.

Another notable feature is DeepSeek's tool-use abilities which integrates with both thinking and non-thinking modes. The ability to think and reason while using external tools makes DeepSeek a formidable competitor in the AI space.

Prominently, DeepSeek also has lower hallucination rates than leading LLMs like Gemini 3.1 and Claude Opus 4.6

On its release, DeepSeek immediately hit headlines due to the low cost of training compared to most major LLMs. Traffic to the DeepSeek website exploded in early 2025.

According to Semrush, DeepSeek gets over 262 million visits per month from more than 42.9 million unique visitors.

4. Claude 4.6 Opus

Developer: Anthropic

Release date: February 5, 2026

Number of Parameters: Unknown (Anthropic does not disclose parameter counts)

Context Window (Tokens): 200,000 (1,000,000 in beta) / 128,000 max output

Knowledge Cutoff Date: May 2025 (reliable) / August 2025 (training data)

What is it? Claude Opus 4.6 is Anthropic's most intelligent model. Anthropic released 4.6 only a few months after Opus 4.5, seeking to expand features of the 4.5 model.

On the web, it receives 219.9+ visits from organic search each month.

Claude remains a popular model for creative tasks and coding.

Since Claude was the first LLM to introduce MCP, it became a popular choice for developers, designers, and marketers.

There's a good number of tools that support MCPs now, so marketers can have Claude perform keyword research, backlink analysis, and other SEO tasks within the chat interface.

The latest upgrade in the flagship Opus line of models is adaptive thinking.

Simply put, Claude has the ability to dynamically decide the amount of thinking effort it should put in on a task in order to maximize speed, results, and computational efficiency.

Opus 4.6 introduces agent teams, a system where multiple AI agents specializing in different tasks handle different parts of a problem in team-work style.

Each agent gets its own context window (up to 1 million tokens), and they can communicate peer-to-peer through the "Mailbox Protocol".

For complex tasks (coding, math, data analysis etc.) requiring deep context knowledge work, Claude 4.6 Opus is right up there with the best LLM tools.

5. Grok-4

Developer: xAI

Release date: July 9, 2025

Number of Parameters: Unknown (Grok-1: 314 billion)

Context Window (Tokens): 256,000

Knowledge Cutoff Date: None (uses real-time information)

What is it? Grok-4 is the newest flagship model from xAI, building on the capabilities of Grok-3 with major improvements in reasoning, speed, and real-time awareness. It’s fully integrated into X (formerly Twitter) for Premium+ subscribers.

As of launch, Grok now serves 42.7 million active users, with daily visits averaging 6.85 million since Grok-4 became available.

6. Mistral Large 2

Developer: Mistral AI

Release date: December 2, 2025

Number of Parameters: 675 billion total / 41 billion active (Sparse MoE)

Context Window (Tokens): 256,000

Knowledge Cutoff Date: Unknown

What is it? Mistral Large 3 uses a mixture-of-experts model with an impressive context window size. While it doesn't measure up to the reasoning and coding capabilities of LLMs like GPT-5.2, Claude 4.6, Gemini 3.1, and DeepSeek, it's a powerful general model with impressive multi-lingual performance.

Mistral's main utility comes from its open-source nature and its ability to be self-hosted. That, combined with its token efficiency makes Mistral a good enterprise-level LLM even if it lags behind GPT and Claude in reasoning tasks.

Want to Spy on Your Competition?

Explore competitors’ website traffic stats, discover growth points, and expand your market share.

It's free

7. Falcon 3

Developer: Technology Innovation Institute (TII)

Release date: December 17, 2024

Number of Parameters: 10 billion (largest variant); family includes 1B, 3B, 7B, and 10B models

Context Window (Tokens): 32,768

Knowledge Cutoff Date: Unknown

What is it? Falcon 3 is a smart LLM that reflects where the open-source AI ecosystem is heading, with a focus on small, efficient, and accessible range of AI models.

It's not a match for leading models like Claude, GPT, and Gemini, since it's a fairly small model.

That said Falcon 3-10B outperforms some Llama variants in Hugging Face leaderboard.

In February 2024, the UAE-based Technology Innovation Institute (TII) committed $300 million in funding to the Falcon Foundation.

8. Llama 4

Developer: Meta AI

Release date: April 5, 2025

Number of Parameters: 109 billion total / 17 billion active (Scout); 400 billion total / 17 billion active (Maverick)

Context Window (Tokens): 10,000,000 (Scout); 1,000,000 (Maverick)

Knowledge Cutoff Date: August 2024

What is it? Llama 4 is another mixture-of-models LLMs consisting of Llama 4 Scout (~109B total) abd Llama 4 Maverick (~400B total). Meta has also expanded its multilingual capabilities, adding support for eight more languages. This model now stands as the largest open-source release from Meta to date.

That said, there was significant controversy where Llama 4 Maverick benchmark results were discovered to have been manipulated by Meta to exaggerate its performance.

With independent testing revealing that Llama 4 performed worse than several models that were already months old at the time of Llama 4's release, Meta delayed the release of LLama 4 Behemoth that still hasn't been made publicly available.

The substandard results of Meta Llama make it suitable for casual tasks at best and not the best tool for jobs involving technical coding, development, and analysis tasks.

9. Inflection-3.0

Developer: Inflection AI

Release date: March 7, 2024

Number of Parameters: Unknown

Context Window (Tokens): 32,768

Knowledge Cutoff Date: Mid 2023

What is it? Inflection-2.5 was developed by Inflection AI to power its conversational AI assistant, Pi. Significant upgrades have been made, as the model currently achieves over 94% of GPT-4’s average performance while only having 40% of the training FLOPs.

Pi differentiates itself by being an empathetic AI. It doesn't measure up to flagship AI tools in technicals tasks, focusing instead on being an emotional support and displaying human-like kindness and diplomacy in its responses.

However, the company has since pivoted from its user-centric AI chatbot and is now prioritizing enterprise use.

The Microsoft-backed startup reached 1+ million daily active users on Pi in 2021, Q1.

10. Jamba

Developer: AI21 Labs

Release date: March 6, 2025 (Jamba 1.6); October 8, 2025 (Jamba Reasoning 3B); January 2026 (Jamba 2 Mini)

Number of Parameters: 398B total / 94B active (Large); 52B total / 12B active (Mini); 3B (Reasoning 3B) — all MoE

Context Window (Tokens): 256,000

Knowledge Cutoff Date: Early March 2024 (Jamba 1.5 Mini confirmed); newer versions likely later 2024

What is it? AI21 Labs created Jamba, the world's first production-grade Mamba-style large language model. It integrates SSM technology with elements of a traditional transformer model to create a hybrid architecture. The model is efficient and highly scalable, with a context window of 256K and deployment support of 140K context on a single GPU.

Jamba's core competency is maintaining high speed and efficiency when processing answers with long contexts.

However, benchmarks show that Jamba is one of the least intelligent LLMs, especially for today's standards. And while it is faster than some counterparts like DeepSeek, Claude, and Grok, its low intelligence leaves it behind leading LLMs, more so considering that it's not the cheapest either.

11. Command A

Developer: Cohere

Release date: March 13, 2025

Number of Parameters: 111 billion (Command A)

Context Window (Tokens): 256,000 (Command A)

Knowledge Cutoff Date: Unknown

What is it? Command A is a series of scalable LLMs from Cohere that support ten languages and 256,000-context length. This model primarily excels at retrieval-augmented generation for enterprise use.

Cohere has moved from the Command R era to Command A and its family (Reasoning, Vision, Translate) in a single year. In doing so, it doubled the context window to 256K and achieved superior inference efficiency.

They're one of the few companies building an enterprise stack (North + Embed + Rerank + Command) rather than just shipping a model.

12. Gemma 3

Developer: Google DeepMind

Release date: August 14, 2025 (Gemma 3 270M)

Number of Parameters: 270M, 1B, 4B, 12B, and 27B (Gemma 3)

Context Window (Tokens): 128,000 (Gemma 3, 4B and above); 32,000 (Gemma 3 1B & 270M; Gemma 3n)

Knowledge Cutoff Date: Unknown

What is it? Gemma is a series of lightweight open-source language models developed and released by Google DeepMind. The Gemma models are built with similar tech to the Gemini models, but Gemma is limited to text inputs and outputs only.

It doesn't compete with frontier closed models on factual accuracy or hard reasoning, but for the open-source / local-deployment community, Gemma 3 is one of the most versatile and well-supported options available.

13. Phi-4

Release date: December 12, 2024 (Phi-4 base); February 26, 2025 (Phi-4-mini & Phi-4-multimodal); April 30, 2025 (Phi-4-reasoning family); March 4, 2026 (Phi-4-reasoning-vision)

Number of Parameters: 3.8B (Phi-4-mini), 5.6B (Phi-4-multimodal), 14B (Phi-4 base / reasoning / reasoning-plus), 15B (Phi-4-reasoning-vision)

Context Window (Tokens): 128,000

Knowledge Cutoff Date: June 2024 (Phi-4-multimodal); February 2025 (Phi-4-mini-reasoning)

What is it? Classified as a small language model (SLM), Phi-4 is Microsoft's latest release with 3.8 billion parameters. Despite the smaller size, it's been trained on 3.3 trillion tokens of data to compete with Mistral 8x7B and GPT-3.5 performance on MT-bench and MMLU benchmarks.

These models are fundamentally limited by size for certain tasks. They simply don't have the capacity to store too much factual knowledge, so users may experience factual incorrectness.

Microsoft has only just very recently released Phi-4-reasoning-vision-15B. This model combines vision understanding with structured reasoning.

In fact, it uses a mixed reasoning/non-reasoning approach, switching automatically depending on the nature of task. For perception-based problems (OCR, captioning etc.), it uses direct inference.

When a scientific or math problem is given, the models applies chain-of-thought reasoning for more thoughtful responses.

14. XGen

Developer: Salesforce AI Research

Release date: May 2, 2025 (xGen-small); April 2025 (xLAM-2 series)

Number of Parameters: 4B–9B (xGen-small); 1B–70B (xLAM-2 series)

Context Window (Tokens): 128,000 (xGen-small); 32,000–128,000 (xLAM)

Knowledge Cutoff Date: Unknown (estimated mid-2025)

What is it? XGen series include a small model as well as a Large Action Model (LAM).

LAMs are specialized, compact language models that focus on predict the next action rather than the next word like traditional LLMs do.

They're purpose-built for AI agents that can trigger workflows, call functions, and execute tasks autonomously.

While these models aren't in competition with the top LLMs, it's part of Salesforce design philosophy to keep these models powerful in narrow applications like agentic workflows for enterprises.

15. DBRX

Developer: Databricks' Mosaic ML

Release date: March 27, 2024

Number of Parameters: 132 billion

Context Window (Tokens): 32,768

Knowledge Cutoff Date: December 2023

DBRX has now been retired and no longer receives any updates.

What is it? DBRX is an open-source LLM built by Databricks and the Mosaic ML research team. The mixture-of-experts architecture has 36 billion (of 132 billion total) active parameters on an input. DBRX has 16 experts and chooses 4 of them during inference, providing 65 times more expert combinations compared to similar models like Mixtral and Grok-1.

16. Pythia

Developer: EleutherAI

Release date: February 13, 2023

Number of Parameters: 70 million to 12 billion

Context Window (Tokens): 2,048

Knowledge Cutoff Date: Mid 2022

What is it? Pythia is a series of 16 large language models developed and released by EleutherAI, a non-profit AI research lab. There are eight different model sizes: 70M, 160M, 410M, 1B, 1.4B, 2.8B, 6.9B, and 12B. Because of Pythia's open-source license, these LLMs serve as a base model for fine-tuned, instruction-following LLMs like Dolly 2.0 by Databricks.

17. Alpaca 7B

Developer: Stanford CRFM

Release date: March 27, 2024

Number of Parameters: 7 billion

Context Window (Tokens): 32,768

Knowledge Cutoff Date: Unknown

Defunct as a model. No successor model released.

What is it? Alpaca is a 7 billion-parameter language model developed by a Stanford research team and fine-tuned from Meta's LLaMA 7B model. Users will notice that, although being much smaller, Alpaca performs similarly to text-DaVinci-003 (ChatGPT 3.5). However, Alpaca 7B is available for research purposes, and no commercial licenses are available.

18. Nemotron-3

Developer: NVIDIA

Release date: December 15, 2025

Number of Parameters: 3.6B active (Nemotron 3 Nano); ~100B and ~500B (Nemotron 3 Super/Ultra, upcoming)

Context Window (Tokens): 128,000 (Llama Nemotron, Nemotron Nano 2) / 1,000,000 (Nemotron 3)

Knowledge Cutoff Date: Mid-2025

What is it? The Nemotron 3 models introduce a breakthrough hybrid latent mixture-of-experts method, featuring a native 1M-token context window.

On some benchmarks, the Nemotron 3 family is more accurate than GPT-OSS-20B and Qwen3-30B-A3B.

Nemotron goes beyond language models and includes an ecosystem capable of reasoning, vision, speech, RAG models for document retrieval, and safety models for real-time content filtering.

19. PaLM 2

Developer: Google

Release date: May 10, 2023

Number of Parameters: 340 billion

Context Window (Tokens): 8,192

Knowledge Cutoff Date: February 2023

PaLM 2 has been decommissioned was originally used to power Google's first generative AI chatbot, Bard (rebranded to Gemini in February 2024).

What is it? PaLM 2 is an advanced large language model developed by Google. As the successor to the original Pathways Language Model (PaLM), it’s trained on 3.6 trillion tokens (compared to 780 billion) and 340 billion parameters (compared to 540 billion).

Wrapping Up

New breakthroughs and innovations are emerging at an unprecedented pace.

We will keep this list regularly updated with new models. If you liked learning about these LLMs, check out our lists of generative AI startups and AI startups.

Stop Guessing, Start Growing 🚀

Use real-time topic data to create content that resonates and brings results.

Exploding Topics is owned by Semrush. Our mission is to provide accurate data and expert insights on emerging trends. Unless otherwise noted, this page’s content was written by either an employee or a paid contractor of Semrush Inc.

Share

Newsletter Signup

By clicking “Subscribe” you agree to Semrush Privacy Policy and consent to Semrush using your contact data for newsletter purposes

Written By

Anthony is a Content Writer at Exploding Topics. Before joining the team, Anthony spent over four years managing content strat... Read more